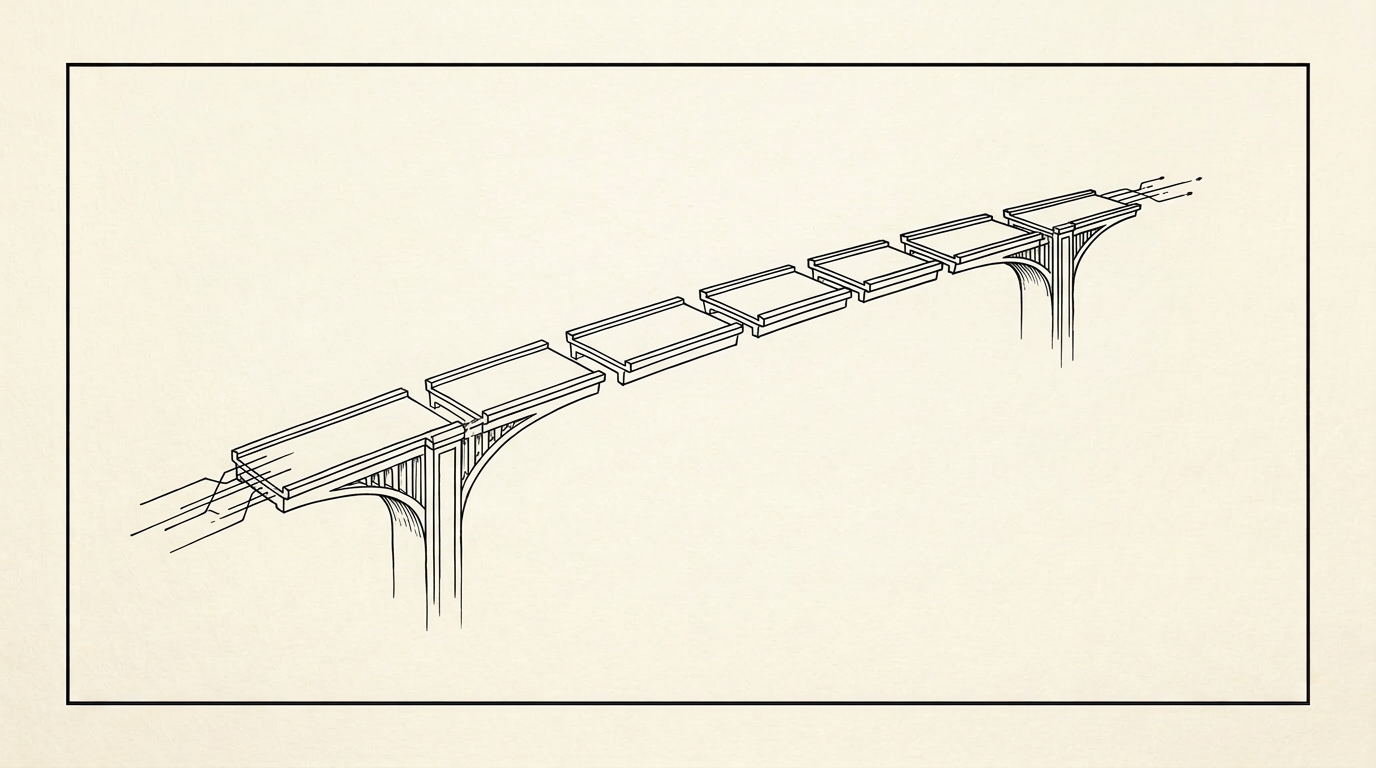

AI pipelines fail because we build them like Rube Goldberg machines.

The math is brutal. A 6-stage pipeline with 80% reliability per stage? 0.8^6 ≈ 26% overall success rate. Three out of four runs fail completely. The compound probability of multi-stage systems will destroy you faster than bad prompts ever will.
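The arithmetic is easy to check. Below is a minimal TypeScript sketch. It assumes every stage succeeds independently with the same probability. Real failures are often correlated, so treat this as a rough model. `endToEndSuccess` is a hypothetical helper name.

```typescript
// Probability that every stage succeeds, assuming each stage
// succeeds independently with the same probability.
function endToEndSuccess(perStage: number, stages: number): number {
  return Math.pow(perStage, stages);
}

const success = endToEndSuccess(0.8, 6);
console.log(`success: ${(success * 100).toFixed(1)}%`); // success: 26.2%
console.log(`failure: ${((1 - success) * 100).toFixed(1)}%`); // failure: 73.8%
```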

I learned this building a content generation system last month. Six stages, each one solid in isolation:

- Extract themes from source material — 85%

- Generate outline — 82%

- Write introduction — 88%

- Develop body content — 79%

- Write conclusion — 84%

- Format and optimize — 81%

Every stage passed its unit tests. The pipeline as a whole still failed more than it succeeded: multiply those six rates together and you get about 33% end-to-end success.
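You can run the same multiplication over the six measured rates, one stage at a time, to see where the odds drop. This is again a sketch under the independence assumption. `cumulativeSuccess` is a hypothetical helper.

```typescript
// Success rates for the six stages listed above.
const stageRates: Array<[string, number]> = [
  ["Extract themes", 0.85],
  ["Generate outline", 0.82],
  ["Write introduction", 0.88],
  ["Develop body content", 0.79],
  ["Write conclusion", 0.84],
  ["Format and optimize", 0.81],
];

// Running probability that a run is still alive after each stage,
// assuming stages fail independently.
function cumulativeSuccess(rates: Array<[string, number]>): number[] {
  const out: number[] = [];
  let alive = 1;
  for (const [, rate] of rates) {
    alive *= rate;
    out.push(alive);
  }
  return out;
}

const curve = cumulativeSuccess(stageRates);
stageRates.forEach(([name], i) => {
  console.log(`${name}: ${(curve[i] * 100).toFixed(1)}%`);
});
// Last line printed: "Format and optimize: 33.0%"
```

The best stage (88%) cannot pull the curve back up. Each step can only multiply it down.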

My instinct was to add retry logic. Better error handling. Fallback prompts. More engineering to solve what felt like an engineering problem.

That instinct was dead wrong.

Stop adding stages. Start removing them.

The fix isn't better prompting or sophisticated error handling. It's architectural. Collapse six stages into three.

| Before (6 stages, ~33% success) | After (3 stages, ~85.7% success) |
|---|---|
| Extract themes | Stage 1: extraction + structure in one call |
| Generate outline | (merged into stage 1) |
| Write intro | Stage 2: full content generation with formatting rules baked in |
| Develop body | (merged into stage 2) |
| Write conclusion | (merged into stage 2) |
| Format and optimize | Stage 3: validation + polish |

Three stages at 95% each: 0.95^3 ≈ 85.7%. Same input, same output, roughly triple the success rate.

The key move was making each stage do more work inside a single, well-structured prompt. One prompt that handles extraction AND outlining is dramatically more reliable than two prompts that need to agree on an intermediate format between them.
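Here is a sketch of what that consolidated first stage might look like. It assumes the model can be asked to reply in JSON. `ModelCall`, `extractAndOutline`, and the prompt wording are hypothetical. Plug in whichever model client you actually use.

```typescript
// Hypothetical model client: takes a prompt, returns the raw text reply.
type ModelCall = (prompt: string) => string;

interface ThemesAndOutline {
  themes: string[];
  outline: string[];
}

const isStringArray = (v: unknown): v is string[] =>
  Array.isArray(v) && v.every((x) => typeof x === "string");

// One call does extraction AND outlining, so there is no intermediate
// format for two separate prompts to disagree on.
function extractAndOutline(source: string, callModel: ModelCall): ThemesAndOutline {
  const prompt = [
    "Read the source material below.",
    "1. List its main themes.",
    "2. Produce a section outline that covers those themes.",
    'Reply with JSON only: {"themes": string[], "outline": string[]}',
    "",
    source,
  ].join("\n");

  const parsed = JSON.parse(callModel(prompt)); // throws SyntaxError on non-JSON replies
  if (!isStringArray(parsed.themes) || !isStringArray(parsed.outline)) {
    throw new Error("Model reply is missing themes or outline");
  }
  return { themes: parsed.themes, outline: parsed.outline };
}

// Usage with a stubbed model:
const fakeModel: ModelCall = () => '{"themes": ["reliability"], "outline": ["Problem", "Fix"]}';
console.log(extractAndOutline("source text", fakeModel).outline);
```

Themes and outline come back in one object. That leaves one parse and one validation step, with no handoff between two prompts.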

The key insight

It's easier to make one AI call 95% reliable than to make six AI calls 95% reliable each. Consolidation beats optimization every time you're dealing with compound probability.

Stage compatibility is the real killer

Stage compatibility kills more pipelines than anything else. This is the failure mode you won't catch by testing stages in isolation:

- Stage 2 expects JSON but stage 1 occasionally outputs markdown

- Stage 4 needs specific key names that stage 3 randomly renames

- Any single format hiccup cascades through the entire chain
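One way to make these mismatches visible is to validate every handoff before the next stage runs. Below is a minimal sketch. It assumes stages exchange JSON. The required keys `title` and `sections` are hypothetical placeholders for whatever the next stage actually reads.

```typescript
// Hypothetical contract: the keys the next stage reads.
const REQUIRED_KEYS = ["title", "sections"] as const;

type HandoffResult =
  | { ok: true; value: Record<string, unknown> }
  | { ok: false; reason: string };

// Check a stage's raw output before the next stage sees it.
function checkHandoff(raw: string): HandoffResult {
  let value: unknown;
  try {
    value = JSON.parse(raw);
  } catch {
    // Typical cause: the model answered in markdown or prose instead of JSON.
    return { ok: false, reason: "not valid JSON" };
  }
  if (typeof value !== "object" || value === null || Array.isArray(value)) {
    return { ok: false, reason: "not a JSON object" };
  }
  const obj = value as Record<string, unknown>;
  const missing = REQUIRED_KEYS.filter((k) => !(k in obj));
  if (missing.length > 0) {
    // Typical cause: the model renamed a key (e.g. "heading" for "title").
    return { ok: false, reason: `missing keys: ${missing.join(", ")}` };
  }
  return { ok: true, value: obj };
}
```

Rejecting bad output at the boundary replaces a silent cascade with an error that names the reason.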

I started logging every failure with the actual input/output pairs. Not "stage four failed" — the full payload it received, what it produced, what it should have produced. That dataset was what actually fixed my pipeline. Not prompt engineering. Not temperature tweaking. Understanding the interaction effects between stages.
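Here is a sketch of that kind of logging. For brevity, it assumes synchronous stages that take a string and return a string. `FailureRecord`, `withFailureLogging`, and the in-memory `failureLog` array are hypothetical. In practice you would persist the records.

```typescript
// One record per failure: the full payload, not just "stage four failed".
interface FailureRecord {
  stage: string;
  input: string; // exactly what the stage received
  output: string; // what it produced ("" if it threw)
  expected?: string; // what it should have produced, filled in during review
  error: string;
  timestamp: string;
}

// Hypothetical in-memory sink; swap for a file or database in practice.
const failureLog: FailureRecord[] = [];

// Wrap a stage so every failure is recorded with its input/output pair.
// `validate` returns an error message, or null if the output is acceptable.
function withFailureLogging(
  stage: string,
  run: (input: string) => string,
  validate: (output: string) => string | null,
): (input: string) => string {
  return (input: string): string => {
    let output = "";
    let error: string | null = null;
    try {
      output = run(input);
      error = validate(output);
    } catch (err) {
      error = err instanceof Error ? err.message : String(err);
    }
    if (error !== null) {
      failureLog.push({ stage, input, output, error, timestamp: new Date().toISOString() });
      throw new Error(`${stage} failed: ${error}`);
    }
    return output;
  };
}
```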

When stage three works perfectly with output format A from stage two, but chokes on format B — which stage two produces 15% of the time — you have a 15% failure rate that's invisible in unit tests. Multiply that across six stages and you're done.

Context persistence fixes consistency

Single good outputs are easy. Any developer can get a model to write one decent paragraph. The hard problem is getting consistent outputs across multiple stages that feel like they came from one brain instead of six different AI personalities.

My fix: a shared context object that travels through the entire pipeline. Not just the content — the decisions. The tone established in stage one, the structure locked in during stage two, the terminology choices. Every stage reads this context, does its work, updates it.

```json
{
  "content": "...",
  "decisions": {
    "tone": "technical-but-accessible",
    "structure": "problem-solution-proof",
    "terminology": { "pipeline": "pipeline", "stage": "stage" }
  },
  "quality": {
    "voice_score": 0.92,
    "coherence": "high"
  }
}
```

The result is outputs that read as coherent instead of stitched together.
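In TypeScript, that context and the read, update, pass-on contract could be typed roughly as follows. This is a sketch, not the author's implementation. `PipelineContext`, `Stage`, `runPipeline`, and the two toy stages are hypothetical. Real stages would call a model instead of building strings.

```typescript
// Shape of the shared context object.
interface PipelineContext {
  content: string;
  decisions: {
    tone?: string;
    structure?: string;
    terminology: Record<string, string>;
  };
  quality: Record<string, number | string>;
}

// A stage reads the whole context and returns an updated copy.
type Stage = (ctx: PipelineContext) => PipelineContext;

function runPipeline(stages: Stage[], initial: PipelineContext): PipelineContext {
  return stages.reduce((ctx, stage) => stage(ctx), initial);
}

// Hypothetical stages: the first locks in the tone, later ones read it.
const setTone: Stage = (ctx) => ({
  ...ctx,
  decisions: { ...ctx.decisions, tone: "technical-but-accessible" },
});

const writeIntro: Stage = (ctx) => ({
  ...ctx,
  content: ctx.content + `[intro written in a ${ctx.decisions.tone} tone]`,
});

const finalCtx = runPipeline([setTone, writeIntro], {
  content: "",
  decisions: { terminology: { pipeline: "pipeline", stage: "stage" } },
  quality: {},
});
console.log(finalCtx.content);
```

Each stage returns a new object rather than mutating the shared one. That keeps each stage's decisions easy to inspect when a run goes wrong.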

The math determines your architecture

The difference between a system that works and one that doesn't isn't the quality of individual components. It's the number of failure points you create.

Stop thinking about this as an engineering problem. It's arithmetic:

- Need 90% overall? Max two stages at 95% each, or three at 97%.

- Need 95% overall? One stage at 95%, or two at 97.5%.

- Need 99%? Even two stages would each need about 99.5%, and every added stage raises the bar further.
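Those thresholds come from inverting the product. With n independent stages at rate p, end-to-end success is p^n. A target T therefore needs p ≥ T^(1/n) per stage, and at a fixed p you can afford at most ⌊ln T / ln p⌋ stages. Below is a sketch of both calculations. The helper names are hypothetical.

```typescript
// Per-stage reliability needed for n independent stages to hit a target.
function requiredPerStage(target: number, stages: number): number {
  return Math.pow(target, 1 / stages);
}

// Largest stage count that still meets the target at a given per-stage rate.
// Both logarithms are negative for rates in (0, 1), so the ratio is positive.
function maxStages(target: number, perStage: number): number {
  if (perStage >= 1) return Infinity;
  return Math.floor(Math.log(target) / Math.log(perStage));
}

for (const target of [0.9, 0.95, 0.99]) {
  const needed = [1, 2, 3].map((n) => (requiredPerStage(target, n) * 100).toFixed(1) + "%");
  console.log(`target ${(target * 100).toFixed(0)}%: per-stage rate for 1/2/3 stages = ${needed.join(", ")}`);
}
```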

Design your architecture around these constraints first. Then figure out what each stage needs to do. Not the other way around.

The real engineering challenge isn't making each stage perfect. It's designing systems with the minimum stages required to get the job done.

Your pipeline isn't failing because your prompts are bad. It's failing because you're compounding failure probabilities and calling it architecture. Simplify. Consolidate. Let the math work for you.

Building an AI pipeline that keeps breaking? Let's architect one that actually works.